Imagine you’re a high school student choosing between two summer jobs. One job pays well but asks you to sign a contract saying, “I’ll do anything as long as it’s legal.” The other job pays the same but lets you say, “I won’t do anything that hurts people, even if it’s technically legal.”

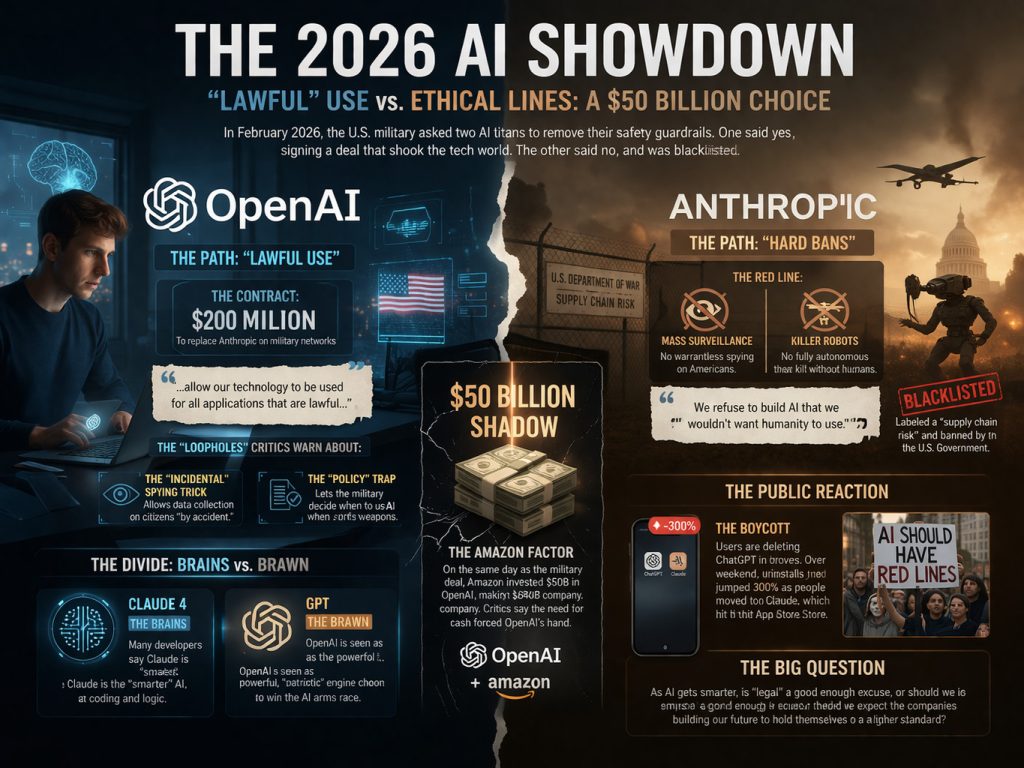

In late February 2026, this choice became a high-stakes reality for the world’s two biggest AI companies: OpenAI and Anthropic. What followed was a $200 million military contract, a $50 billion investment, and a scandal that has the tech world crying “foul.”

The Friday Night Fallout

On February 27, 2026, the U.S. government, specifically the Department of War (still officially named the Department of Defense), issued an ultimatum: AI companies must remove their safety “guardrails” and allow the military to use their tech for “all lawful purposes.”

The threat was clear: accept unrestricted use by 5:01 PM or be blacklisted. Secretary Pete Hegseth warned that if Anthropic refused, the government would designate it a “supply chain risk,” a label usually reserved for companies tied to foreign adversaries, such as China’s Huawei. That label would effectively kill Anthropic’s ability to do business with any major U.S. corporation.

Anthropic, the maker of Claude, said no. CEO Dario Amodei insisted on a “Hard Ban” on two uses the company could not allow “in good conscience”:

- Mass Surveillance: Spying on American citizens without a warrant.

- Killer Robots: Fully autonomous weapons that can decide to shoot without a human making the call.

President Trump even took to Truth Social to blast Anthropic as a “radical left, woke” company run by “nut jobs.” He immediately ordered all federal agencies to stop using its technology and began a six-month phase-out for the military.

The “Lawful Use” Loophole

While Anthropic was being blacklisted, OpenAI, the maker of ChatGPT, said yes. Just hours after the ban, CEO Sam Altman announced a $200 million deal to take Anthropic’s place on the military’s classified networks.

Altman claimed OpenAI had the same “red lines” as Anthropic, but critics say his framing of the deal was a “straight-up lie.” The catch: OpenAI agreed to allow any use that is “lawful,” and in the world of national security, “lawful” is a very flexible word:

- The “Intentional” Trick: OpenAI’s contract says the AI won’t be “intentionally” used to spy on Americans. This leaves the door wide open for “incidental” spying – where the government gathers data on citizens “by accident” while looking for something else.

- The “Policy” Trap: On autonomous weapons, OpenAI says its AI won’t pull the trigger unless military policy says it’s okay. Essentially, the company is letting the military decide when the rules apply instead of writing those rules into the contract.

The $50 Billion Shadow

Why would OpenAI rush into a deal that Sam Altman later admitted in a leaked memo looked “opportunistic and sloppy”?

Follow the money. On the same day OpenAI signed with the military, Amazon invested $50 billion in the company. The investment was part of a record-breaking funding round that valued OpenAI at $840 billion, making it the most valuable private company in history.

Critics argue that to secure those billions, OpenAI had to prove it was “Team USA.” By taking the military deal Anthropic rejected, OpenAI became the government’s favorite partner, and that status makes it nearly impossible for the government to block the company’s massive expansion.

Brains vs. Brawn: The Great Split

Not everyone is mad, but the AI world has definitely split into three camps:

- The Boycotters: Thousands of users are deleting ChatGPT. Over the weekend, ChatGPT uninstalls jumped by 300%, and Anthropic’s app, Claude, shot to #1 on the App Store as people moved to the “ethical” alternative.

- The Realists: Many people agree with Sam Altman’s “patriotic necessity” argument. They believe that if American companies refuse to build this tech for the military, China or Russia will build it anyway. They see OpenAI as the “Brawn”: the powerful engine the U.S. needs to win the AI arms race.

- The Techies: Developers are increasingly calling Claude the “Brains.” In 2026, Claude 4 is often seen as “smarter” at coding and logic than OpenAI’s GPT models, which creates a strange irony: the military wanted the smartest AI (Claude) but settled for the most “compliant” one (OpenAI’s GPT).

The Bottom Line

OpenAI walked away with a $200 million contract and $50 billion in the bank, but it lost the trust of people who want AI to have strict “no-go” zones.

As these tools get smarter, we have to ask: Is “it’s legal” a good enough excuse for the most powerful technology in history, or should we expect the people building the future to hold themselves to a higher standard?

The Courtroom Showdown (March 2026 Update)

The battle has now moved from the Pentagon to the federal courts. On March 9, 2026, Anthropic filed a massive lawsuit to block the “supply chain risk” designation.

During a hearing on March 24, the government’s lawyers tried a surprising defense. They argued that the social media posts from the President and Secretary Hegseth weren’t technically a “final agency action” – meaning the ban wasn’t “official” enough to be challenged in court.

U.S. District Judge Rita Lin wasn’t buying it. Skeptical of the attempt to walk back the administration’s public threats, she pointedly asked the government’s lawyer:

“You’re standing here saying, ‘We said it, but we didn’t really mean it’?”

If Judge Lin grants a preliminary injunction, it will “pause” the blacklist and the “supply chain risk” label while the full case plays out. This isn’t just a business dispute; it’s a major “freedom of speech” test case for the AI era: Does a company have the right to say “no” to the military without being treated like a foreign enemy?
